How can artificial intelligence (AI) models be trained across organizational boundaries without disclosing sensitive data or intellectual property?

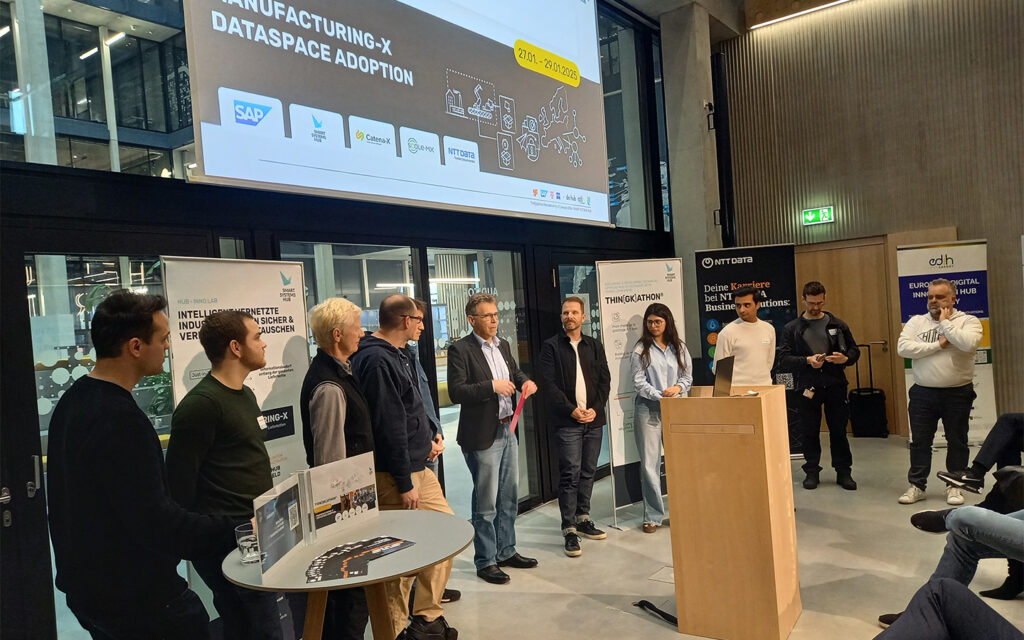

This question was at the core of one of the challenges addressed at the Thin[gk]athon “Manufacturing-X – Dataspace Adoption”, which took place from 27 to 29 January 2026 at the SAP Innovation Hub in Munich.

The co-innovation format initiated by the Smart Systems Hub brings together companies, research institutions, and technology partners to collaboratively prototype concrete industrial challenges within a short time frame. The Thin[gk]athon focused on data spaces in the context of Manufacturing-X and on the technical realization of cross-company, data-sovereign collaboration.

The Challenge: Joint AI Training While Preserving Data Sovereignty

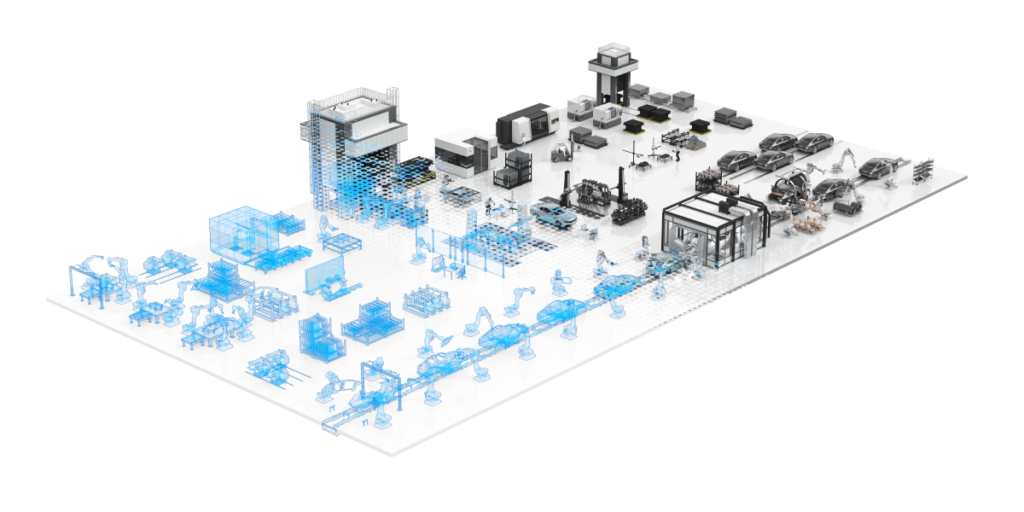

In industrial practice, data is typically distributed across different companies, production sites, and heterogeneous system landscapes. Centralizing such data is often neither feasible nor desirable, due to data protection requirements, intellectual property concerns, or competitive considerations.

At the same time, locally available datasets are frequently insufficient to develop robust and generalizable AI models. Rare fault patterns or specific operating conditions occur only sporadically and are therefore difficult to model when considered in isolation.

This creates a fundamental tension between the need for collaboration and the requirement for data sovereignty. Addressing this tension was the core objective of the challenge. The goal was to enable multiple companies to jointly train an AI model without exchanging training data and without establishing centralized data storage.

Technical Approach: Federated Learning within a Data Space Architecture

The selected solution approach is based on federated learning. Training data remains entirely with the respective participants and is processed locally within their existing IT infrastructures. Only model parameters, such as weights or update information, are exchanged between participants. These parameters do not allow direct inference of the underlying raw data.

The exchange of model artifacts is realized using the Eclipse Dataspace Connector (EDC). The EDC provides the technical foundation for policy-based, sovereign data exchange within a data space architecture. It enables the definition and enforcement of usage policies, access rights, and contractual rules governing data and artifact exchange.

Within three days, a complete end-to-end prototype was implemented. The prototype demonstrated the full workflow: local training at multiple participants, controlled exchange of model parameters via the data space, and aggregation into a shared global model. This provided a concrete proof of the technical feasibility of combining AI-based methods with Manufacturing-X-compliant data spaces.

Demonstration Based on an Industrial Use Case

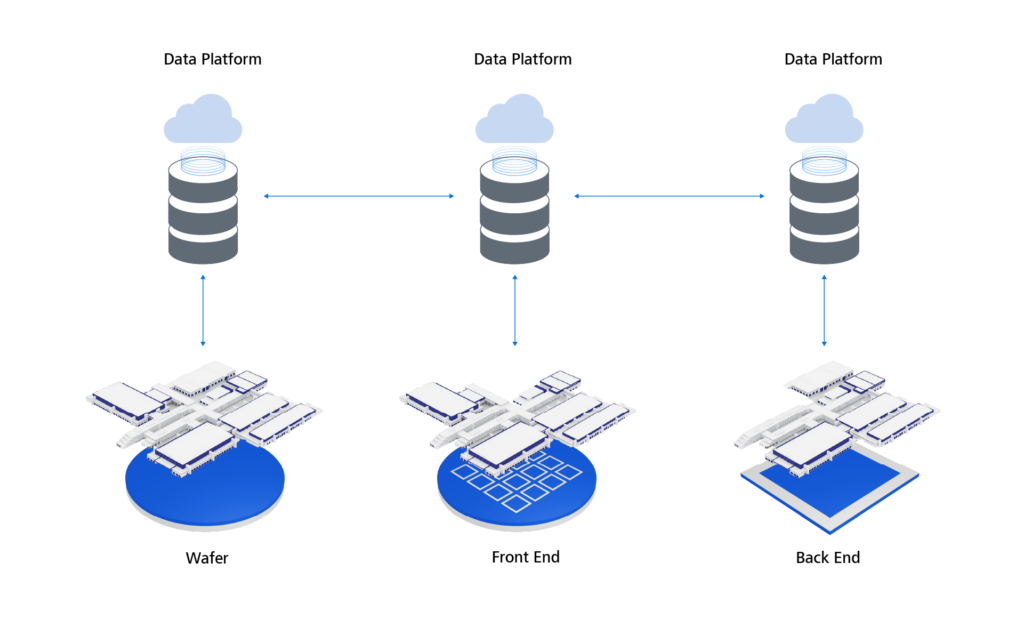

The technical approach was demonstrated using an example from rotor and turbine condition monitoring. Publicly available NASA datasets were used, representing different operating conditions and fault classes.

Individual turbines were modeled as separate training instances, each representing a distinct company within a federated learning setup. The respective datasets were deployed on different hardware environments to realistically reflect separated enterprise infrastructures.

Each instance performed local training on its own compute environment. After each training cycle, the resulting model parameters were made available via the Eclipse Dataspace Connector, aggregated centrally, and subsequently redistributed to the participating instances.

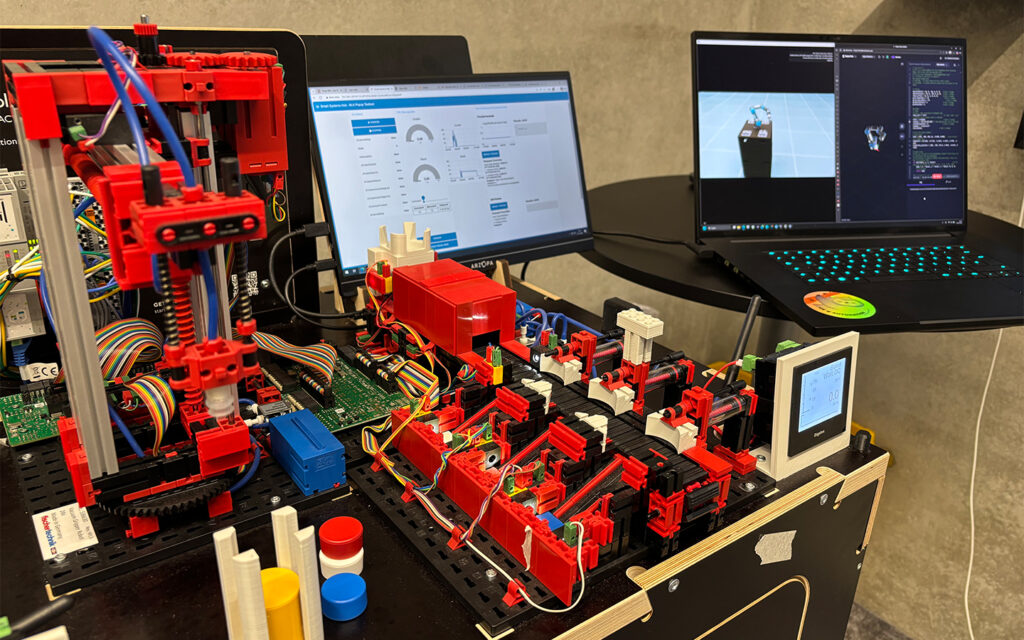

Another key element of the Thin[gk]athon was a live demonstration environment provided by the Smart Systems Hub using robotic systems. In a laboratory setup, it was shown how data from real industrial assets can be collected, integrated into a data space, and utilized for different application scenarios. In addition, data from a real test bench was integrated to demonstrate that the data exchange mechanism also functions with live operational data. While the data volume was not sufficient for training purposes, it effectively illustrated the end-to-end data flow, including integration into an application and a dedicated training dashboard.

Interdisciplinary Collaboration as a Key Success Factor

In parallel with this challenge, additional teams worked on further topics within the Manufacturing-X ecosystem, including:

- Supply chain transparency with a focus on interoperable data exchange

This challenge investigated how supply chain information can be structured and exchanged transparently across organizational boundaries. Topics included data models, access control concepts, and the integration of existing enterprise systems into a data space architecture.

- Cross-company calculation of Product Carbon Footprints (PCF)

This team focused on capturing and sharing emissions-relevant data to enable consistent and traceable CO₂ calculations across company boundaries, while protecting sensitive production information.

- Battery Product Passport and structured provision of product-related information

The focus was on enabling interoperable access to product data across the entire lifecycle. Architecture concepts for a data-space-compliant implementation were developed.

All teams were interdisciplinary, combining software engineers, data scientists, system architects, and domain experts. They were supported by mentors who provided guidance on both technical and methodological aspects.

On the final day, all teams presented their results to an expert jury from industry and technology. Within less than three days, functional prototypes, robust architecture concepts, and concrete demonstrators were created, clearly illustrating the potential of data-space-based collaboration.

Conclusion

The Thin[gk]athon “Manufacturing-X – Dataspace Adoption” demonstrated in a practical and tangible manner how data-space-enabled collaboration can be implemented in industrial contexts. The parallel challenges highlighted the significant potential of interoperable data spaces for future industrial value creation.

The clear organizational and methodological framework provided by the Smart Systems Hub enabled teams to focus quickly and effectively on their respective challenges. The SAP Innovation Hub offered an inspiring environment that facilitated direct exchange with experts and in-depth technical discussions. This was complemented by hands-on demonstrations providing concrete insights into real-world use cases.

For companies and professionals engaged in Manufacturing-X and industrial data spaces, the Thin[gk]athon offers a valuable opportunity to validate their own questions under realistic conditions and to prototype technical solutions. Further events are already planned by the Smart Systems Hub.

Further Information

Smart Systems Hub Dresden – Organization and co-innovation formats around Manufacturing-X

https://www.smart-systems-hub.de/en

SAP Innovation Center / SAP Innovation Hub – Host and supporter of the Thin[gk]athon

https://www.sap.com/germany/about/munich.html

Eclipse Dataspace Connector – Open-source technology for sovereign data exchange in data spaces

https://projects.eclipse.org/projects/technology.dataspaceconnector

Manufacturing-X – Initiative for interoperable industrial data spaces

https://factory-x.org/manufacturing-x/

![Definition of digital model, shadow and twin based on flows of information[2]](https://blogs.zeiss.com/digital-innovation/en/wp-content/uploads/sites/3/2023/06/Folie1-1024x442.jpeg)